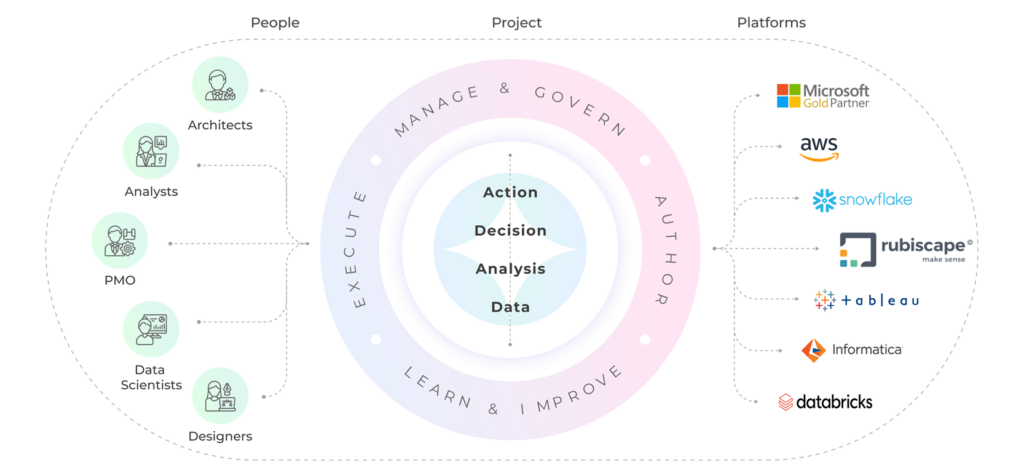

- What we do

- How we do it

- See how global leaders have transformed their businesses with Inteliment. From driving innovation to achieving measurable growth, explore the real impact our solutions have had across industries. Your success could be next.

- Collaborate with Inteliment to unlock the full potential of Global Capability Centers (GCCs). Leverage our expertise in data science and analytics to drive innovation, efficiency, and competitive advantage across your global operations.

- In a fast-paced digital world, Inteli-Labs helps organizations turn ideas into scalable solutions. Drawing on our expertise in AI, IoT, and RPA, we provide tailored strategies that address industry-specific challenges.

- Who we are

- Join us in driving innovation! Partnering with Inteliment gives you access to advanced technologies and expert support. Together, we’ll create impactful solutions that meet market needs and delight customers. Let’s build a better future!

- Careers

- At Inteliment, we’re dedicated to nurturing future talent. Our Campus Connect program bridges academia and industry, offering students hands-on experience through mentorship, workshops, and internships.

- We’re here to help! Whether you have questions, need support, or want to explore partnership opportunities, we’d love to hear from you. Reach out to our team, and let’s start a conversation about how we can work together to achieve your goals!

Global

India

Australia